Imagine the scenario – you start on a new feature or project and there is a need for dynamic content. Sounds simple, right? Just pick a CMS platform, set up an account, update a bit of content, publish and you are done. Well, if only it were that simple! *

*Note – this post assumes that a platform like WordPress isn’t sufficient for your requirements

Where to start?

If you look at https://en.wikipedia.org/wiki/List_of_content_management_systems, it certainly won’t clear things up. There are a LOT of options! So, what sort of information should you use to feed into your decision process?

A few core CMS concepts

Before we go further, let’s define a few key concepts:

- Headless Content Management System (CMS) – “A headless CMS is a content management system that provides a way to author content, but instead of having your content coupled to a particular output (like web page rendering), it provides your content as data over an API.” https://www.sanity.io/blog/headless-cms-explained

- Digital Experience Platform (DXP) – “Gartner defines a digital experience platform (DXP) as an integrated set of technologies, based on a common platform, that provides a broad range of audiences with consistent, secure and personalized access to information and applications across many digital touchpoints.” https://www.gartner.com/reviews/market/digital-experience-platforms

It’s worth noting that certain vendors aim to fulfil both definitions above, whereas others operate purely as headless, cloud-native SaaS providers.
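To make the headless idea a bit more concrete, here is a minimal sketch in Python. The JSON payload, its field names and the two render functions are all made up for illustration; they are not any particular vendor's API. The point is simply that the same content item, delivered as data, can feed more than one output.

```python
import json
from html import escape

# Made-up payload standing in for what a headless CMS API might return.
# The field names ("title", "body") are assumptions, not a real vendor schema.
API_RESPONSE = '{"title": "Hats & scarves", "body": "Up to 50% off selected items."}'


def render_html(item: dict) -> str:
    """Render the content item as a web page fragment."""
    return (
        f"<article><h1>{escape(item['title'])}</h1>"
        f"<p>{escape(item['body'])}</p></article>"
    )


def render_plain_text(item: dict) -> str:
    """Render the same item for a text-only channel, e.g. an email or SMS."""
    return f"{item['title'].upper()}\n{item['body']}"


# The CMS only supplies data; each channel decides how to present it.
item = json.loads(API_RESPONSE)
html_output = render_html(item)
text_output = render_plain_text(item)
```

In a traditional, coupled CMS the HTML rendering would live inside the CMS itself. Here it lives in your own code, which is both the flexibility and the extra responsibility of going headless.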

How to help you make a decision?

Ah, but what if the decision has already been made?

Within your team(s) or business(es), do you have an existing CMS? If so, can it be scaled or modified to serve your new needs? It’s worth considering that ‘scaled’ here covers many things: licensing, usability, modifiability, supportability, physical capacity and plenty more. This discussion often leads to some interesting outcomes: it can easily expose issues or, just as usefully, confirm that your existing tooling is in good shape.

Ok, so we already successfully use CMS X

We’re getting warmer, but I’d suggest you still need to answer a few more questions:

- Is it fit for purpose?

- Do its content delivery approaches fit the needs of your new requirements?

- Will the team that uses the system be the same as the existing editors?

How to select a new CMS?

I’d recommend you build up your own criteria for assessing different tools. Here are a few thought starters:

- Cost

- What are the license fees, and how do they scale?

- Is it a consistent cost year by year?

- What if you need more editors?

- What if you need more content items, or media items?

- What if you need to serve more traffic?

- How much would a new environment cost?

- How much does it cost to run and maintain the system?

- What hosting costs will you incur?

- How much does a release cost?

- What do your different disaster recovery (DR) options cost?

- How will the infra receive security patches and software upgrades?

- What does an upgrade of the tool look like? Is it handled for you, or do you need to own an upgrade?

- Note. This has stung us hard in the past with certain vendors!

- How much effort/cost is required to set it up before you can focus on delivery of features to the customer?

- Features

- Does the tool support the features you require?

- Or, does the tool come with features you don’t require?

- This is an interesting point – are you buying a Ferrari when all you need is a Ford?

- Are your competitors using the same tool?

- Does it suit your business model?

- What multi-lingual requirements do you have?

- And how does that map to content and presentation?

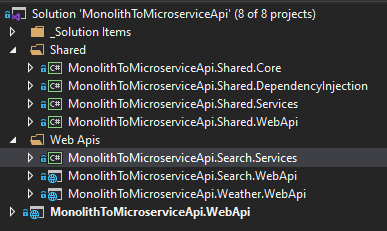

- Technology constraints

- Are there any technology restrictions imposed by the tools?

- E.g. hosting options, language choices, CI/CD patterns, tooling constraints

- Who owns the hosted platform, and how do backups work?

- Does the location of data matter for your business?

- Platform vs a tool

- This ties into the concepts above: do you want a DXP or a headless CMS?

- Is a composable architecture desirable for your team(s)?

- Out of the box vs bespoke

- What comes ‘for free’? And, do you even want the ‘free’ features?

- If we think of enterprise platforms such as Sitecore, you get a lot out of the box (OTB) for free, e.g. the concepts of sites, pipelines, commands and many more.

- If you go down the headless route, building the equivalent lies in your dev team’s hands.

- Building a team

- Can you even build a team around tool X?

- Do you have in-house experience in the tool or associated tools?

- Support

- What if something goes wrong, what support can you get?

- Note: I’d see support running from before you sign the contracts all the way through to ongoing support after go-live.

- Scalability, performance and common NFRs

- Will the tool scale and perform to your requirements?

It’s worth noting that this is not meant to be an exhaustive list. Every project will have different requirements and will prioritize different metrics. The goal is to provide some thought starters in areas we’ve found useful in the past.
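If you want to turn criteria like these into a side-by-side comparison, one common approach is a simple weighted score. The sketch below is only an illustration: the weights, the tool name "CMS Y" and every score in it are made-up placeholders, and you would swap in your own criteria and numbers.

```python
# Hypothetical weights (percentages summing to 100): how much each
# criterion matters to *your* project. These numbers are placeholders.
WEIGHTS = {"cost": 30, "features": 25, "technology": 15, "team": 15, "support": 15}

# Hypothetical scores from 1 (poor) to 5 (great), agreed by the team
# after demos, trials and vendor conversations.
SCORES = {
    "CMS X": {"cost": 2, "features": 5, "technology": 3, "team": 4, "support": 4},
    "CMS Y": {"cost": 4, "features": 3, "technology": 4, "team": 3, "support": 3},
}


def weighted_score(scores: dict, weights: dict) -> float:
    """Combine per-criterion scores into a single number on the 1-5 scale."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"No score given for: {sorted(missing)}")
    return sum(weights[c] * scores[c] for c in weights) / sum(weights.values())


def rank_tools(all_scores: dict, weights: dict) -> list:
    """Return (tool, score) pairs, best first."""
    ranked = [(name, weighted_score(s, weights)) for name, s in all_scores.items()]
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)


for name, score in rank_tools(SCORES, WEIGHTS):
    print(f"{name}: {score:.2f}")
```

With scores this close, the number matters less than the conversation it forces: agreeing the weights as a team is often where the real priorities come out.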

Finally, the fun part – rolling it out

Well, almost. Now the fun / hard part (delete as appropriate :)).

You have your new tool, but how does it map to the business? How will the editors get on with it? What does multi-lingual design look like? What technology do you use to build the front ends? Where to start? What is the meaning of life?

Maybe that’s content for another blog post…

Happy editing!